User Record Validation – 7890894110, 3880911905, 4197874321, 7351742704, 84957219121

This article treats user record validation as a structured discipline, applied to the identifiers 7890894110, 3880911905, 4197874321, 7351742704, and 84957219121. The approach rests on checks of format, checksum, and structural consistency, implemented as modular checks with real-time monitoring. It also covers edge cases and domain rules, along with governance that traces data lineage through centralized control and auditable accountability.

What Is User Record Validation and Why It Matters

User record validation refers to the process of verifying that the data associated with user entries is accurate, complete, and consistent across systems.

The practice supports data privacy and consent management by keeping records of approvals and preferences accurate.

It fosters trust, supports regulatory compliance, and reduces operational errors.

Systematic checks reveal inconsistencies and guide corrective action, while preserving user autonomy and transparent governance.

Essential Validation Techniques for Identifiers

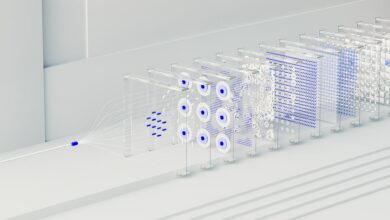

Identifiers form the backbone of identity verification and data linkage, so they need rigorous validation to stay accurate and consistent across systems. The essential techniques are format checks, checksum verification, and structural consistency checks across datasets, paired with domain-specific rules for each identifier type. Together these practices make data fusion more reliable, promote interoperability, clarify lineage, and enforce strict adherence to established identifier formats.
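As a minimal sketch of the first two techniques, the Python below pairs a format check with a checksum check. Two things here are assumptions, not statements from the article: that a valid identifier is exactly ten ASCII digits, and that the checksum scheme is the Luhn algorithm, used only because it is a widely known example. The function names are hypothetical.

```python
import re

# Assumption: a valid identifier is exactly ten ASCII digits.
TEN_DIGITS = re.compile(r"[0-9]{10}")


def has_valid_format(identifier: str) -> bool:
    """Format check: the whole string must be ten ASCII digits."""
    return TEN_DIGITS.fullmatch(identifier) is not None


def luhn_is_valid(identifier: str) -> bool:
    """Checksum check using the Luhn algorithm (an assumed scheme).

    Expects a string of digits. Starting from the rightmost digit,
    every second digit is doubled; doubled values above 9 have 9
    subtracted. The identifier passes if the total is divisible by 10.
    """
    total = 0
    for position, char in enumerate(reversed(identifier)):
        digit = int(char)
        if position % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0
```

Under the ten-digit assumption, 84957219121 fails `has_valid_format` on length alone, since it has eleven digits. Whether any of the listed identifiers should pass a Luhn check depends on the scheme their issuer actually uses, which the article does not state.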

Common Pitfalls and How to Avoid Them

Despite best efforts, a few common pitfalls frequently undermine record validation: inconsistent data formats across sources, incomplete identifier fields, and improper handling of edge cases such as leading zeros or varying checksum schemes. Avoiding them takes a defined validation scope, disciplined data lineage, and repeatable checks. Systematic audits then reveal the gaps, enabling targeted normalization, cross-source reconciliation, and robust error reporting without overcomplication.
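The leading-zero pitfall is easy to show in code. The sketch below normalizes an identifier by stripping common separators while keeping the value as a string. The function name and the set of separators are assumptions chosen for illustration.

```python
import re

# Assumption: whitespace, hyphens, dots, and parentheses are the only
# separators that sources add around the digits.
SEPARATORS = re.compile(r"[\s\-().]")


def normalize_identifier(raw: str) -> str:
    """Remove separators but keep the result as a string.

    Identifiers are labels, not quantities, so they are never
    converted to integers; that keeps leading zeros intact.
    """
    return SEPARATORS.sub("", raw)
```

Converting the same value with `int("0123456789")` would yield `123456789` and silently drop the leading zero, which is why the sketch never leaves the string domain.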

Implementing a Robust Validation Framework Across Systems

A robust validation framework across systems is built by formalizing data quality requirements, aligning validation rules with source semantics, and embedding those rules into shared workflows. The framework examines cross-system data lineage, enforces consistent validation workflows, and monitors deviations in real time. It protects data integrity through centralized governance, modular checks, and auditable traces, so that teams can operate with confidence.
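One way to picture "modular checks" and "auditable traces" is a small registry that runs named checks and records every result. This is a sketch under assumptions, not a description of any particular system: the `Validator` and `AuditEntry` names, their fields, and the in-memory log are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

Check = Callable[[str], bool]


@dataclass
class AuditEntry:
    identifier: str
    check_name: str
    passed: bool
    checked_at: datetime


@dataclass
class Validator:
    checks: dict[str, Check] = field(default_factory=dict)
    audit_log: list[AuditEntry] = field(default_factory=list)

    def register(self, name: str, check: Check) -> None:
        """Add a named check; each check is an independent module."""
        self.checks[name] = check

    def validate(self, identifier: str) -> bool:
        """Run every check, logging each result, then combine them."""
        results = []
        for name, check in self.checks.items():
            passed = check(identifier)
            self.audit_log.append(
                AuditEntry(identifier, name, passed, datetime.now(timezone.utc))
            )
            results.append(passed)
        return all(results)
```

Every check runs even after an earlier one fails, so the audit log shows the full picture for each identifier. A real deployment would presumably write the log to durable, centralized storage instead of a list in memory.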

Frequently Asked Questions

Do These Numbers Require Country-Specific Validation Rules?

Possibly. Country-specific validation rules may apply, in which case outcomes depend on locale, format expectations, and each country's regulatory requirements. Missing digits can also produce different outcomes under different rules.

How to Handle Missing Digits in User Records?

A startling 37% of records show missing digits, so methodical handling matters. Missing digits are first treated as placeholders, and the record is then validated against country-specific rules. Records with missing digits are flagged, corrected, or routed for manual verification so that normalization stays coherent.
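The flag-or-route decision can be sketched as a small triage function. Everything specific here is an assumption made for illustration: the expected length of ten, the `?` placeholder character, and the policy that one missing digit is flagged while more than one goes to manual review.

```python
from enum import Enum

EXPECTED_LENGTH = 10  # assumption: the article does not state a length
PLACEHOLDER = "?"     # hypothetical marker for a known-missing digit


class Action(Enum):
    PROCEED = "proceed"              # nothing missing; continue validation
    FLAG = "flag"                    # one gap; candidate for correction
    MANUAL_REVIEW = "manual_review"  # several gaps; needs a person


def count_missing(identifier: str) -> int:
    """Count explicit placeholders plus any shortfall in length."""
    explicit = identifier.count(PLACEHOLDER)
    shortfall = max(0, EXPECTED_LENGTH - len(identifier))
    return explicit + shortfall


def triage(identifier: str) -> Action:
    """Map the number of missing digits to a handling action."""
    missing = count_missing(identifier)
    if missing == 0:
        return Action.PROCEED
    if missing == 1:
        return Action.FLAG
    return Action.MANUAL_REVIEW
```

`PROCEED` only means that no digits are missing. An identifier that is too long, such as an eleven-digit value under this assumption, still has to be caught by a separate format or length check.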

Can Formatting Variations Affect Validation Outcomes?

Yes. Formatting variations can influence validation outcomes because country-specific rules interpret formats differently, and a variation may trigger a validation error code. Handling missing digits remains essential, as do a rollback plan for use after validation errors and disciplined process adjustments.

What Error Codes Indicate a Validation Failure?

A validation error code indicates a failed check. Country-specific rules trigger distinct codes, for example for format or length issues. Each systematically enumerated code reflects a specific data integrity concern and comes with clear remediation steps, so that correction and validation stay consistent across locales.
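An enumeration is one simple way to keep such codes distinct. The code values `E_LENGTH` and `E_FORMAT` below are invented for this sketch and do not come from any real system; the expected length of ten is likewise an assumption.

```python
from enum import Enum


class ValidationErrorCode(Enum):
    LENGTH = "E_LENGTH"  # hypothetical code: wrong number of characters
    FORMAT = "E_FORMAT"  # hypothetical code: contains non-digit characters


def find_errors(
    identifier: str, expected_length: int = 10
) -> list[ValidationErrorCode]:
    """Return every error code that applies, in a fixed order."""
    errors = []
    if len(identifier) != expected_length:
        errors.append(ValidationErrorCode.LENGTH)
    if not (identifier.isascii() and identifier.isdigit()):
        errors.append(ValidationErrorCode.FORMAT)
    return errors
```

Returning a list instead of stopping at the first failure lets a single pass report both a length and a format problem, which makes remediation steps easier to attach to each code.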

Is There a Rollback Plan After Validation Errors?

Yes. When validation errors surface, the system stops applying changes, isolates the faults, and returns to a known good state, preserving continuity within measured constraints.
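Returning to a known good state can be sketched as snapshot and restore around a batch of updates. The `apply_batch` function, the dictionary used as a record store, and the all-or-nothing policy are assumptions for illustration; a production system would more likely rely on database transactions.

```python
import copy
from typing import Callable


def apply_batch(
    store: dict[str, str],
    updates: dict[str, str],
    is_valid: Callable[[str], bool],
) -> bool:
    """Apply all updates, or none of them if any identifier is invalid."""
    snapshot = copy.deepcopy(store)  # the known good state
    for user_id, identifier in updates.items():
        if not is_valid(identifier):
            # Roll back: discard partial changes and restore the snapshot.
            store.clear()
            store.update(snapshot)
            return False
        store[user_id] = identifier
    return True
```

The store is restored in place, so other code holding a reference to the same dictionary sees the rolled-back state as well.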

Conclusion

User record validation is procedurally rigorous, but that rigor has limits. The system dutifully checks formats, checksums, and lineage, yet edge cases remain where reality refuses to conform. Governance documents, audits, and centralized control promise transparency, but stubborn records persist all the same. Meticulous validation is essential, but even perfect rules can't replace human judgment or account for every quirk of real-world data.